I recently watched Apple's October "Special Event" on October 30th, 2018, wherein they introduced an updated MacBook Air, Mac Mini, and new iPads. While I have my own thoughts regarding the perhaps misaligned "fit" of the new Air and Mac Mini, I did find the new iPads rather interesting. More and more, Apple is positioning the iPad as a computer. Disregarding their previous ad campaigns (which were arguably poor), Apple is consistently blurring the lines between iOS and macOS. While I do have some reservations about this, Apple is taking an interesting stance on the future of precision input.

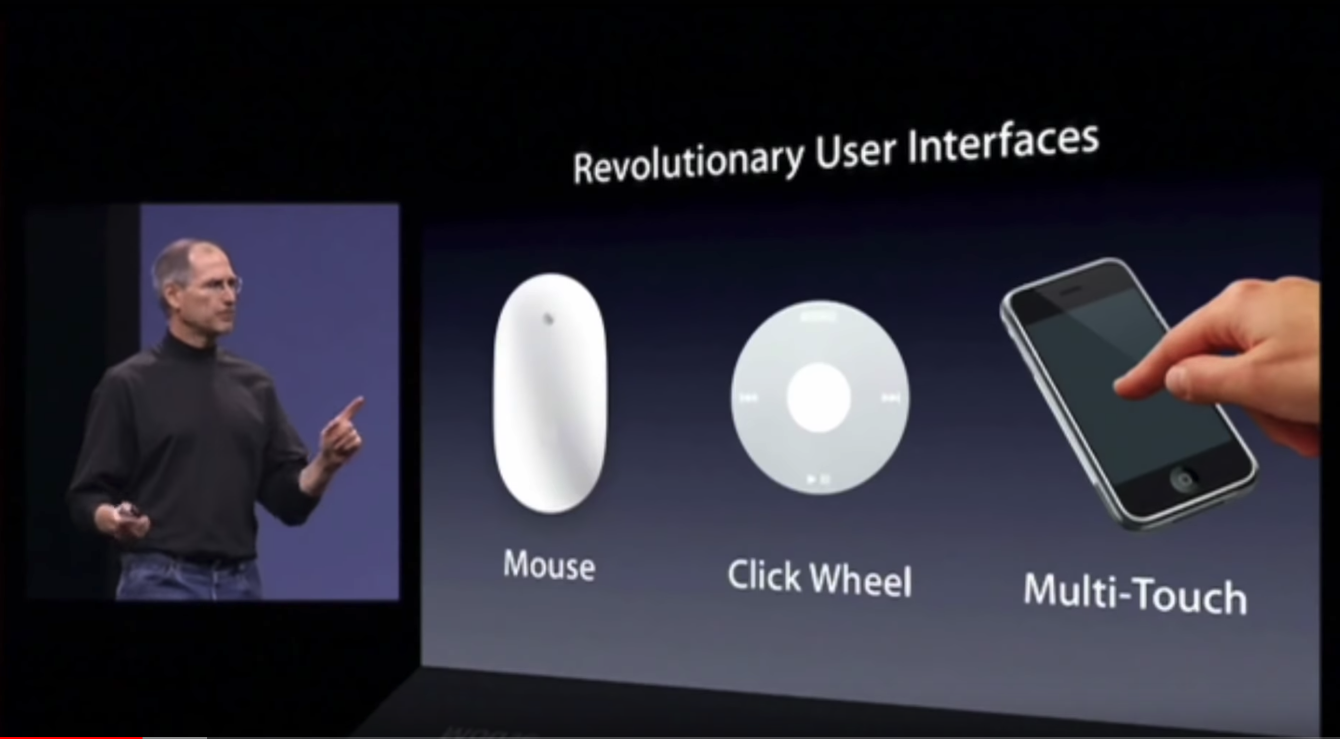

First was the mouse. The second was the click wheel. And now, we're going to bring multi-touch to the market. And each of these revolutionary interfaces has made possible a revolutionary product - the Mac, the iPod and now the iPhone.

This quote from Steve Jobs, from the introduction of the first iPhone, is a glimpse into how Apple has thought about input devices over the last decade or so. While the click wheel served a very niche use case (scrolling menus), multi-touch (or touch in general) can replace much of what a mouse can do. That said, touch input has always been limited by desktop-class applications built for precision inputs (like a mouse).

Enabling touch input on desktop

Apple has consistently held out on putting a touch screen into its Mac lineup. While they undoubtedly have the technology to do it, they've purposefully steered away from it. Why force macOS to support touch input when iOS already does?

It's an easy argument - as Apple seeks to bring macOS and iOS closer together, they're making a decision about which primary form of input each platform should support. Additionally, one could argue that "dumbing down" full-scale desktop-class applications to support a secondary input form (touch) isn't nearly as efficient as building native touch-designed applications from the ground up. Think about it:

- Desktop (macOS) = Mouse/Trackpad

- Mobile (iOS) = Multi-touch/Pen

So in the future of computing, which approach is more future-proof?

The Microsoft Approach

The Microsoft approach is to let you choose. They don't force developers to make their applications touch-friendly, but they definitely encourage it. If you know that a growing proportion of your user base is using devices equipped with touchscreens, or just tablets altogether, you'd be doing yourself a disservice by not enabling at least some rudimentary form of touch input. After all, Microsoft has a whole suite of touch-enabled devices with the Surface line, so they're leading the charge to touchify their OS.

An important note here: this isn't about making applications usable on mobile (think small screens), it's about making them functional with a different form of input. Since Microsoft doesn't have a huge stake in a mobile OS (not counting Windows Mobile/Windows Phone), it makes sense that they are more incentivized to optimize Windows 10 rather than start from the ground up with, say, Android.

The Apple Approach

Apple is continuing to make the argument through product announcements that touch/pen input is the future, and iOS is becoming increasingly capable of replacing a desktop environment. The newer generations of iPads even include an app dock, wholly reminiscent of its macOS counterpart. There's also assisted multitasking and the introduction of true interoperability between devices with the use of USB-C. One missing piece is still a usable file browser, but perhaps Apple is purposefully leaving this out. This short list only scratches the surface of the differences between these OSes, but I'll leave the rest for another day.

iOS apps are approaching their desktop counterparts

The most interesting part of the demo that I saw from Apple was what appeared to be a full-featured Photoshop running on the iPad Pro. The presenter even explained that the PSD she was working with was over 3GB in size and included more than 100 individual layers. As someone who used Photoshop quite a bit during my first few years at university (and to a lesser extent when working on slides/presentations at work), I can attest that this is an impressive feat. I wonder what the next generation of creative professionals will create (or feel empowered to create) with this level of technology enablement.

iPad Pro vs Surface (Pro, Studio, etc)

If you look at Apple's and Microsoft's "flagship" devices, both are billed as "laptop replacements". While PCMag (and I) give Microsoft Surface the edge in this argument, this is strictly because it runs a full version of Windows, which enables many of the workflows end users are familiar with. In fact, when you study both devices and their snap-on keyboards, you'll see that Apple's is missing a trackpad. Perhaps 'missing' isn't the right word, but when an iPad runs iOS and is wholly designed for touch input, you simply don't need a trackpad.

And I suppose that's the crux of this argument. Apple has made a bet that the future of computing won't involve a mouse at all, and as the lines between iOS and macOS blur (not to mention Apple seemingly moving away from Intel chips), I think we'll start to see a fundamental shift in how consumer devices handle input.

How ironic that in 2007 Steve Jobs so vehemently spoke out against a stylus, and yet here we are in 2018 with Apple introducing their second-gen "pencil" stylus for iPads. And while I believe Microsoft still has the edge right now in terms of functionality on their portable lineup, I would not be surprised if the next 10 years of computers show us that the future doesn't involve a mouse anymore.